AI agents are rewriting how organizations access their data. Text-to-SQL copilots, analytics Slackbots, agentic BI - every data platform team we talk to is building or evaluating some version of this. OpenAI and Meta have both published detailed accounts of building internal data agents at scale with impressive results.

The models are finally capable enough, but there’s a gap in the infrastructure that almost every team hits at the same point:

Agents can query your data, but they don't understand it.

Schema metadata tells an agent what tables and columns exist. It doesn't tell the agent what they mean, how they're typically used, how fresh the data is, or which join patterns produce correct results. That's the knowledge an experienced analyst carries, and it's exactly what agents are missing.

Without it, you get plausible-looking SQL that returns wrong answers. The data team ends up spending more time debugging agent outputs than they saved by building the agent.

Today we're launching the Ryft Context Layer: structured context for every table in your Iceberg lakehouse, generated in real-time, to unlock AI for every organization.

Context Has Always Been the Hard Part

The data industry has spent years trying to solve the context problem. Semantic layers promised to bridge the gap between raw data and business meaning by predefining metrics, dimensions, and joins. They work for structured BI use cases, but they require significant upfront modeling, and the rigid definitions don't reflect how data is actually used in practice. Data catalogs took a different angle: centralized documentation with lineage and governance workflows, but keeping that documentation accurate and complete has always been a manual effort that falls behind the moment a pipeline changes. Legacy approaches are quickly being deprecated as more AI native solutions are needed.

These tools were designed for a world where humans queried data through controlled interfaces. In that world, incomplete context was tolerable, because an analyst could fill in the gaps with institutional knowledge and experience.

That assumption breaks down with AI agents. When thousands of agents across an organization are querying your lakehouse, you can't rely on human intuition to compensate for missing context. Every agent needs the same depth of understanding, and it needs it programmatically, continuously, and at the scale of your entire lake. Manual documentation and upfront modeling simply can't keep pace.

Introducing the Lakehouse Context Layer

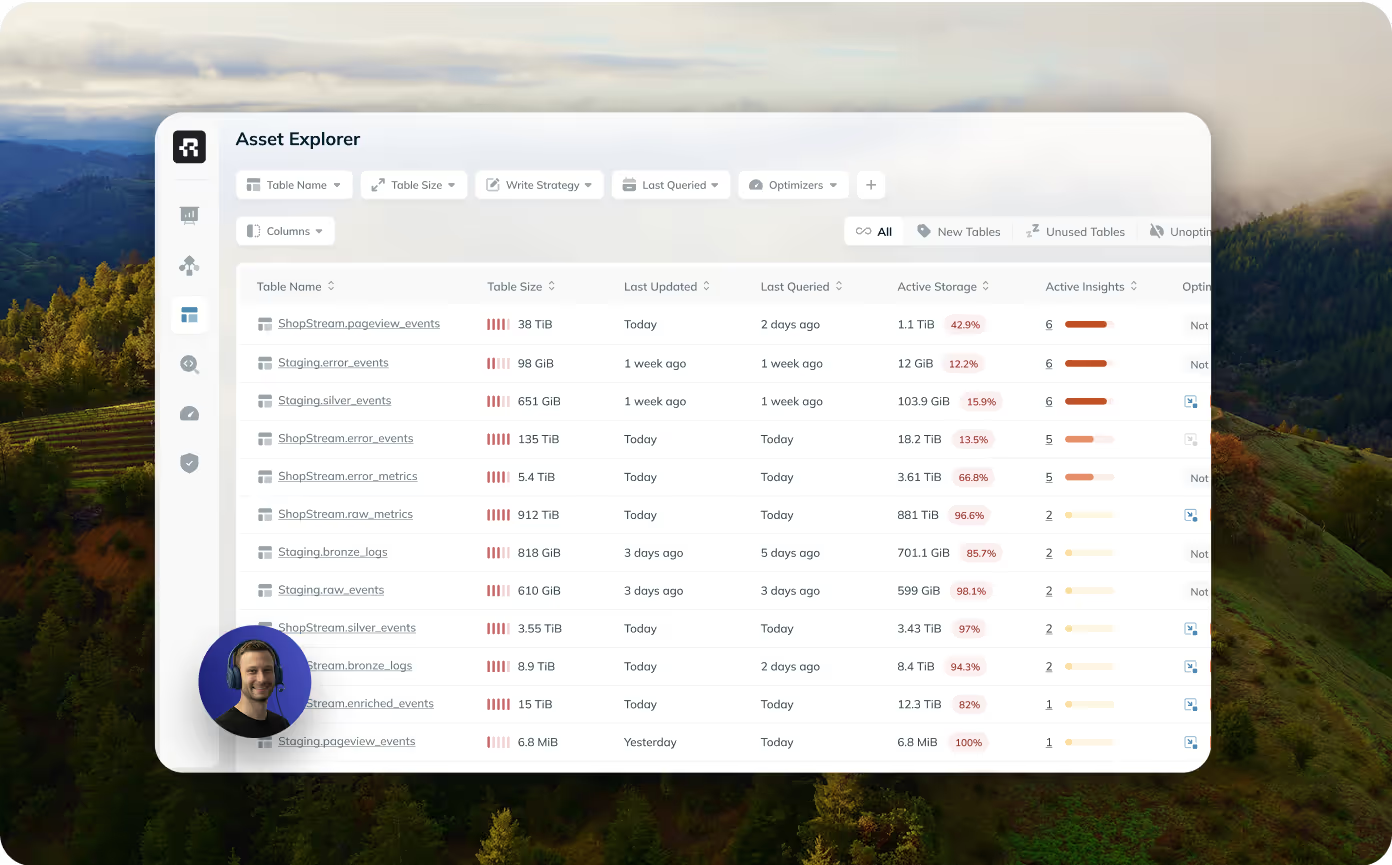

Ryft already monitors Iceberg lakehouses for optimization and observability. That means we already collect the signals that matter most for context: schema and structure, query patterns across every engine (Spark, Trino, Snowflake, Athena), write and ingestion behavior, freshness, and statistics. It's the same information a senior analyst would use to understand a table, captured in real time, at infrastructure scale.

The Lakehouse Context Layer combines these signals into rich, agent-readable context for every table. Instead of starting from a blank documentation page, your tables come with context that reflects how the data actually behaves: what gets queried, how it's joined, how often it's updated, what the common access patterns look like.

But operational signals don't capture everything. Business definitions, known data quirks, deprecation plans - that's institutional knowledge only your team has. OpenAI and Meta both found the same thing building their own data agents, and a16z recently argued the same: automated context gets you most of the way, but human input provides the final links that no pipeline can infer.

The Ryft Context Layer lets your team add that organizational context on top, and it takes priority wherever it's added. We made this design choice deliberately to automate the foundations, and combine it with what machines genuinely can't infer.

Context for Every Layer of Your Organization

Different teams ask different questions, and the context they need is different too. Data platform teams need operational context to run the lakehouse: which tables are hot, which are stale, what's the blast radius of a change. Data engineers need pipeline context: dependencies, freshness SLAs, join patterns, known quality issues. Analysts and business stakeholders - increasingly through AI agents - need semantic context: what does this column mean, which table is the source of truth, what filters are standard.

The Context Layer serves all three.

.png)

Built for Any Agent Framework

The Context Layer is exposed as an MCP server that any agent framework can connect to - whether you're building with Claude, Cursor, ChatGPT, open-source tools, or your own internal agents. Once connected, agents can discover relevant tables through semantic search, retrieve full context for specific tables, and find historical queries similar to a natural language question.

There's no new infrastructure to deploy and no data to move. If you're already using Ryft, you can start working with the context layer straight away. Reach out to your account manager for more details.

Want to see what structured context does for your agents? Book a demo →

Browse other blogs

Announcing the Ryft Context Layer

Ryft already monitors Iceberg lakehouses for optimization and observability. That means we already collect the signals that matter most for context: schema and structure, query patterns across every engine (Spark, Trino, Snowflake, Athena), write and ingestion behavior, freshness, and statistics. It's the same information a senior analyst would use to understand a table, captured in real time, at infrastructure scale.The Lakehouse Context Layer combines these signals into rich, agent-readable context for every table. Instead of starting from a blank documentation page, your tables come with context that reflects how the data actually behaves: what gets queried, how it's joined, how often it's updated, what the common access patterns look like.

.avif)

.avif)

.avif)